The U.S. of A-Bomb: How American Nuclear Weapons Changed the Course of Human History

You're free to republish or share any of our articles (either in part or in full), which are licensed under a Creative Commons Attribution 4.0 International License. Our only requirement is that you give Ammo.com appropriate credit by linking to the original article. Spread the word; knowledge is power!

The United States of America can take pride in a number of things, among them arguably the two greatest cultural and scientific achievements of human history: The moon landing and atomic power. It is the latter that we will focus on in this article: the unleashing of the power of the atom, for good and for ill.

America was the first nation to build an atomic bomb, and it applied the new weapon immediately to the war effort. It was not for a lack of trying on the part of America’s rivals: Germany famously had its own nuclear program. Less well known is that the Empire of Japan was also looking for a way to weaponize the primal forces of nature.

But America got there first. And its ability to do so not only changed the course of the Second World War, it also changed the course of human history. For the first time ever, mankind had the ability to wipe away human life as we know it at the push of a button. On the other hand, we also gained a clean, reliable fuel source that could outstrip all existing sources, if the political will were there.

This is the story of how America unleashed and harnessed the power of nuclear fission, for better or for worse.

Pre-History of the Atomic Bomb: Physics and Fascism

To understand how the United States acquired the bomb, it is first necessary to briefly explain how man’s physical view of the cosmos was revolutionized by the new physics. At the turn of the 20th century, Pierre and Marie Curie discovered radium and observed that certain substances were highly radioactive.

Scientists and laymen alike began to believe that atoms contained massive amounts of energy just waiting to be harnessed. H.G. Wells published his novel about atomic warfare, The World Set Free, in 1914. In 1924, Winston Churchill speculated about the destructive power of atomic energy.

A little later, the top physicists of Germany were leaving in droves because of the rise to power of Hitler and the Nazis. By the time the Nazis invaded Poland in 1939, many of the top scientists had left the continent entirely, most for the United States, but some also for Canada. As a result, the best nuclear physicists in the world were concentrated in North America by the end of the 1930s, leaving Germany with a serious shortage.

Still, it was the Germans who first discovered nuclear fission, in 1938, at the Kaiser Wilhelm Institute for Chemistry. Otto Hahn and Fritz Strassmann were the first to observe the process, which made a nuclear weapon theoretically possible. Once nuclear fission was discovered and the major powers of the time grasped its applications, the race was on to see who would be the first to unlock the power of the atom for military purposes.

The Also-Rans: Germany and Japan

By all accounts, Germany should have had the first nuclear weapon. The Germans, after all, were the first to discover nuclear fission, though the atom itself was first split by New Zealand-born physicist Ernest Rutherford at the University of Manchester in 1917. However, as stated above, many of the country’s top scientists were leaving because of the Nazi regime and its hostility toward Jews.

The Nazis were also tripped up because they disbanded their original team pursuing the secrets of the atom, the Uranverein (Uranium Society). The group was formed in April 1939 but disbanded in August of the same year, and some of the most brilliant scientists left in Germany were removed from the project and sent off to regular military duty. There were a couple of other German projects in 1939, but none was as centralized as the later efforts, which more closely resembled the Manhattan Project.

The second Uranverein was formed on September 1, 1939, the same day the Second World War began. The group’s initial report was so pessimistic that its members concealed their results from Hitler for two weeks and even then mentioned them only casually: it would probably take five years to build an atomic bomb, and the Americans would likely develop one sooner. As a result, the atomic weapons project was not given the attention it would have received had the Germans believed they could build a weapon first.

In 1942, the project was scaled back even further, as the group’s efforts were not expected to be decisive in ending the war. The atomic weapons project was effectively shelved, with Albert Speer directing the bulk of the work toward the production of nuclear energy rather than nuclear weapons. Speer believed that it would have taken all of Germany’s resources to produce an atomic bomb by 1947.

Japan actually had a more robust and advanced nuclear weapons program, which was ultimately scuttled for a historically ironic reason: The Japanese didn’t believe anyone else had the imagination to see how atomic power could be used as a weapon. Indeed, they singled out the American government as not being able to grasp how nuclear fission could be used in warfare. The project was scrapped in favor of more work on radar.

Curiously, Japan is sometimes counted as a nuclear power today. The term “nuclear latency” describes its position: Japan has all the know-how and material to produce an atomic weapon very quickly if it chooses to do so. It is widely believed that, if it had the will, Japan could produce an atomic weapon within a year. Indeed, this is sometimes the subject of internal debate in the country, which is constitutionally prohibited from making offensive weapons but has sought ways to define tactical nuclear weapons as defensive, especially as China has become more militarized and aggressive.

The Pre-History of the Manhattan Project

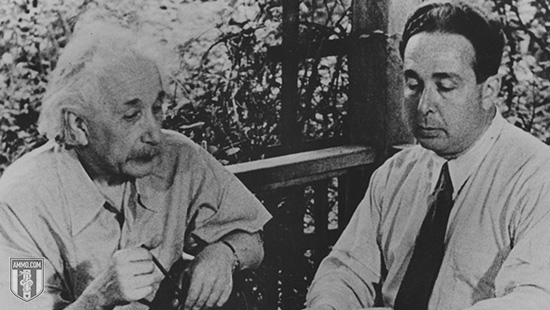

The Einstein–Szilárd letter was drafted by Leó Szilárd in 1939. Albert Einstein signed off on it. The gist of the letter was that Germany was attempting to develop an atomic bomb. It urged America to begin stockpiling uranium ore and to expend more resources studying atomic chain reactions.

Roosevelt was extremely interested in the letter. Despite the fact that the United States was not yet a belligerent in the Second World War, he ordered the creation of the Advisory Committee on Uranium. Army Lieutenant Colonel Keith F. Adamson authorized $6,000 (over $100,000 in 2020 dollars) to buy graphite and uranium for Enrico Fermi and Leó Szilárd to use toward their experiments.

At first, they were not able to achieve the chain reaction necessary to produce atomic energy of any kind, let alone a weapon.

The program went through a couple of different permutations and even absorbed the findings of an earlier, more aggressive and more advanced British program of a similar kind. By 1941, British researchers had estimated the critical mass of uranium needed for such a chain reaction at about 22 pounds, a payload that could easily be carried by the bombers of the time.

On October 9, 1941, President Franklin Delano Roosevelt ordered the creation of a robust, permanent and dedicated atomic program steered by his Top Policy Group. Roosevelt was a part of this group along with Vice President Henry Wallace, Secretary of War Henry L. Stimson, and the Chief of Staff of the Army, General George C. Marshall. Roosevelt chose the Army over the Navy to run the program because he had more faith in its ability to manage large-scale projects.

The Manhattan Project Begins

Originally “Manhattan” was only the name of one of the districts where the atomic work was happening. However, over time it became the code name for the entire project, which eventually involved over 130,000 workers and cost about $2 billion ($28 billion in 2020 dollars). Over 90 percent of that was spent on factories and the production of the fissionable materials that were a prerequisite for building a weapon. Less than 10 percent of the budget was spent on the actual weapon itself.

The cost of producing fissionable material was so significant because of the technology of the 1940s. Enriching uranium was difficult and costly, with some researchers at the time estimating that it would take 27,000 years to produce a single gram of the correct type of uranium, when the project needed kilograms. The Manhattan Project eventually got around this in part by learning how to get much more bang for its buck. It adopted a different bomb design, implosion, which compressed a smaller quantity of fissile material to reach critical mass and so required significantly less of it.

The Manhattan Project is perhaps the most intensive human labor effort in history, undertaken by the ascendant world economic power during the biggest war in human history. The War Production Board gave the project the highest priority rating in 1942, a luxury that the United States was easily able to afford because of its place in the world economy and its relatively late entry into the Second World War.

While the Project is generally associated with Los Alamos (known as Site Y), there were no fewer than 20 different sites where the Manhattan Project was up and running. Indeed, the first self-sustaining nuclear chain reaction took place not in the New Mexico desert, but in a University of Chicago laboratory.

Originally, the Manhattan Project was working on three different types of nuclear weapons: the Thin Man (a gun-type plutonium weapon), the Fat Man (an implosion-type plutonium weapon) and the Little Boy (a gun-type uranium weapon). The first was abandoned in July 1944, when researchers realized that it would probably not work properly; the Fat Man was then prioritized while work on the Little Boy continued.

A 100-ton test explosion took place at the Trinity Site outside Bingham, New Mexico, on May 7, 1945, the day Germany signed its surrender, but the first true atomic test took place on July 16, 1945. Codenamed “Trinity” by Robert Oppenheimer, the test was planned because the team wasn’t sure the weapon would work and, if it did, wasn’t entirely sure what it would do upon detonation. The device was nicknamed “The Gadget” and was essentially the same design as the Fat Man. In the event of failure, Major General Leslie Groves would have had to explain the loss of a billion dollars of plutonium to the Senate.

On August 6, 1945, the B-29 bomber Enola Gay dropped Little Boy on the city of Hiroshima. Three days later, the B-29 Bockscar dropped the Fat Man implosion-type plutonium weapon on the city of Nagasaki, the secondary target. Its original primary target, Kokura, was too heavily covered in clouds and smoke.

On August 14, 1945, Japan surrendered to the Allied Powers.

The Manhattan District itself was abolished August 15, 1947.

Invading Japan?

Setting aside extensive nuclear weapons testing, the bomb has been used only twice, both times by the United States against Japan. There is reason to believe that the United States threatened the Empire of Japan with nuclear destruction before dropping the bomb: the Potsdam Declaration warned Japan that if it did not surrender unconditionally, it would face “prompt and utter destruction.”

The bombings killed between 129,000 and 226,000 people, most of whom were civilians. This does not include the number of civilians killed due to the radiation from the bomb.

It is appropriate to consider this one of the most inhumane attacks in the history of war. However, it is equally appropriate to weigh the atomic bombing of Japan against the planned invasion of Japan, known as Operation Downfall. It’s easy to Monday-morning quarterback the decision to drop the bomb, but to fully understand the “why” requires stepping into the shoes of the men making the decisions. It does not require giving them a pass.

Few Americans at the time gave much thought to the Okinawa Campaign, even though it left over 100,000 men dead on both sides. FDR died in the middle of it and Germany surrendered, so the Okinawa Campaign, despite its carnage, got lost in the shuffle.

The lasting impression on the American public was a taste for extra-conventional means of waging the war to save American lives, including but not limited to napalm and carpet bombing. This wasn’t just due to the high casualties, but also to the Japanese methods of war, including kamikaze attacks and banzai charges, both of which weaponized suicide. In response, Western forces became far more aggressive, with picket destroyers and flame-throwing tanks. Air Force General Curtis LeMay, who later ran for Vice President on a platform of using nukes against North Vietnam, was more than willing to embrace these more brutal methods.

There was a plan for invading the Japanese mainland islands dating back to before the surrender of Nazi Germany. Known as Operation Downfall, it was never going to be stealthy: the geography of Japan made the general outline of the invasion readily apparent to the Japanese government.

Operation Downfall, whose first phase was scheduled for November 1945, was divided into two parts. The first, Operation Olympic, was a series of Army landings designed to capture the southern third of Kyūshū, the third-largest of the Japanese mainland islands. The second, Operation Coronet, was aimed at capturing the Kantō Plain on the main Japanese island of Honshū. The invasion force would consist of American forces and a combined Commonwealth Corps; the Soviets had not yet declared war on Japan, and the Chinese were in no position to help invade the Japanese mainland. The Japanese defensive plan, Operation Ketsugō, called for an all-out defense of Kyūshū.

If carried out, this would have been the largest amphibious invasion in history.

The Japanese had 2.3 million Army troops at their disposal, aided by a 28-million-strong national civilian militia. The Vice Chief of the Imperial Japanese Navy General Staff, Vice Admiral Takijirō Ōnishi, put the number of Japanese deaths at a staggering 20 million. The U.S. Joint Chiefs of Staff predicted between 25,000 and 46,000 American dead. University of Chicago political science professor Philip Quincy Wright and American physicist William Shockley, using information from Colonels James McCormack and Dean Rusk as well as cardiac surgeon Michael E. DeBakey, envisioned a far grimmer result for the Allies: between 400,000 and 800,000 Allied dead, with Japanese fatalities between 5 and 10 million.

The first plan to mitigate the casualties was not atomic weapons but chemical ones, with phosgene, mustard gas, tear gas and cyanogen chloride moved into the theater. Biological weapons were also on the table.

There was also the massive bombing campaign to both weaken the military and soften civilian resolve on the home islands in advance of the invasion. All told, LeMay firebombed 67 cities. The campaign devastated Japan’s six largest cities and destroyed many of its so-called “paper cities,” which were still largely built, as the name implies, of paper and wood. The disturbing fact is that LeMay still might have saved more lives than would have been lost on both sides in a land invasion of the homeland.

Dropping the Bomb: Hiroshima

As everyone reading this knows, America eventually decided to drop its two remaining atomic bombs on Japan. The target criteria were that the target had to be larger than three miles in diameter and located in a major industrial city, that the blast would cause significant damage, and that the city was unlikely to be attacked before August 1945. Five cities were selected as potential primary targets:

- Kokura, now part of Kitakyushu, housed one of Japan's largest munitions plants.

- Hiroshima, an industrial center and port of embarkation that remained the site of a major military headquarters, even in 1945.

- Yokohama, an urban population center where aircraft, machine tools and electrical equipment were manufactured alongside docks and oil refineries.

- Niigata, a port city where steel was manufactured.

- Kyoto, another major center of industrial production. It was ultimately spared, reportedly because Secretary of War Henry L. Stimson had fallen in love with the city while there on his honeymoon.

Top brass originally considered merely demonstrating the device where the Japanese could see it, or even warning the Japanese of the attack to give them time to surrender. However, for a variety of reasons, these options were considered unfeasible, and only direct military use in a surprise attack was considered a worthwhile use of the two remaining atomic devices. One concern was that, if warned, the Japanese might move Allied prisoners of war into the target area before the bomb was dropped. Likewise, while propaganda leaflets had been dropped on major Japanese cities for months, the Allies decided against a special warning advising the inhabitants of target cities to evacuate, for many of the same reasons that they didn’t warn the Japanese government.

Even without the atomic bomb, General LeMay was already planning a much more brutal campaign of bombing. He was looking to build a fleet of 5,000 B-29s, with another 5,000 B-24s and B-17s as backup. This would have been a rain of napalm from some 10,000 bombers stationed on Okinawa, something far more destructive than both atomic bombings. LeMay’s goal was to break the Japanese as thoroughly as possible before American ground troops arrived, saving American lives.

On August 6, 1945, Hiroshima was the primary target of the Enola Gay and its Little Boy bomb, with Kokura and Nagasaki as alternative targets. The Enola Gay was accompanied by two other B-29 bombers, The Great Artiste and Necessary Evil, the latter of which was unnamed at the time. The bomb was released at 8:15 a.m. Hiroshima time, fell for 44.4 seconds, and detonated about 1,900 feet directly above the Shima Surgical Clinic, approximately 800 feet from its intended aiming point because of crosswinds.

The detonation was the equivalent of 16 kilotons of TNT, creating a one-mile radius of destruction, with fires spreading across 4.4 square miles. It was considered very inefficient, with only 1.7 percent of the material actually undergoing nuclear fission. The Enola Gay spent two minutes over the target area, but was 10 miles away when the bomb detonated. The crew was 11.5 miles away when they felt the shockwave. Only three onboard the Enola Gay knew what they were carrying. The other men were told only to expect a blinding flash and given safety goggles.

Approximately 30 percent of Hiroshima’s population was killed in the blast or the resulting fires. Approximately 20,000 Japanese military personnel were killed. Some 4.7 square miles of the city were destroyed, amounting to about 67 percent of the city’s buildings, with another 7 percent damaged. Ninety percent of the doctors and 93 percent of the nurses in the city were killed in the bombing.

Japan literally didn’t know what had hit it until 16 hours later when President Truman announced the attack. The speech threatened further destruction and land invasion if the Japanese did not surrender. On August 7, Japanese atomic scientists arrived and confirmed that the city had been destroyed by an atomic device. The Japanese cabinet met and determined that only one or two more bombs of this type could exist, and decided to continue the war effort.

On August 8, 1945, the Soviet Union declared war on Japan. The next day it began an invasion of Manchuria. The Soviet invasion was likely a bigger factor in the Japanese surrender than either bombing.

Dropping the Bomb: Nagasaki

When it became clear that Japan would not surrender, the second bomb was readied. The attack was originally scheduled for August 11, but forecasts of bad weather forced the date to be moved up to the 9th. The Enola Gay would once again be involved in this mission, this time as a weather reconnaissance aircraft. Bockscar would have the dubious honor of dropping the bomb on Nagasaki, a major industrial city largely built of wood.

The primary target that day was Kokura, which was spared because smoke from conventional bombing had drifted over the city, reducing visibility. The crew then turned to the secondary target, Nagasaki. They were uncertain whether Nagasaki would offer better visibility and did not have the fuel to go on to a third target, so they decided that if Nagasaki didn’t work out they would dump the bomb into the ocean.

At 11:01 a.m. on August 9, 1945, the Fat Man bomb was dropped over Nagasaki, exploding 44 seconds later approximately 1,650 feet above a tennis court. This was nearly two miles from the intended target. A large section of the city was protected because the bomb detonated over the Urakami Valley, where the surrounding hills shielded other districts. The explosive force was equivalent to 21 kilotons of TNT. The American bomber Big Stink saw the explosion from 100 miles away and flew closer to inspect it.

Bockscar had to make an emergency landing on Okinawa; it was nearly out of fuel when it touched down at 140 miles per hour.

Casualty estimates for this bombing range wildly, from 22,000 to 75,000. Although the bomb was more powerful than the one dropped on Hiroshima, it caused far less carnage because it detonated over the valley. The blast radius was one mile, with fires spreading another two miles. There was no firestorm in Nagasaki because the area did not have enough fuel to sustain one, making Hiroshima the last firestorm created by bombing in world history.

Two more Fat Man bombs were being prepared, with technicians working around the clock to get them ready for General LeMay. However, they were not ready in time, sparing Sapporo, Japan, from further nuclear attacks planned for August 11 and 14.

It is worth noting that the effects of the bombing were not limited to the initial blast or the resulting fireball. There were also increases in cancer rates, birth defects, and impaired brain development among children in the area.

Some 200 survivors of the Hiroshima bombing took refuge in Nagasaki. An estimated 165 people are known to have been affected by both bombings, nine of whom said they were within the blast radius both times. Only one, Tsutomu Yamaguchi, was officially recognized as a double hibakusha (blast-affected person). He died of stomach cancer at the age of 93.

On the flip side, Lieutenant Jacob Beser was the only man to fly aboard both strike aircraft.

The weapons that were used against Hiroshima and Nagasaki would now be regarded as small, tactical nuclear weapons.

The Surrender and Occupation of Japan

It is worth noting that the Japanese continued to fight, at least in theory, for a full six days after the attack on Nagasaki. The cabinet stuck to its original four conditions for surrender: keeping the Emperor on the throne, Japanese management of its own disarmament, no occupation of Japan, and Japanese control of any war crimes trials.

While historians disagree on whether it was the bomb or the Soviet declaration of war that ended the war, it’s worth briefly considering the latter. Japanese leaders were afraid of what a Soviet occupation would look like, both in the long and the short term. However, the counterargument is that the threat of invasion from the United States, combined with continued aerial bombardment and a naval blockade, would probably have been enough to end the war.

Other historians think that the bombings had nothing to do with defeating Japan and everything to do with intimidating the Soviets, whom most considered the inevitable enemy in the next war. Others argue that the bombs were dropped to keep the Soviets from gaining territory in Asia by forcing Japan to surrender early.

Whatever one believes about why the Japanese surrendered, dropping the bomb almost certainly saved Japanese lives. What’s more, it’s difficult to believe that the occupation of Japan would have been nearly as benevolent and benign as it was had America just lost 400,000 men.

An attempted military coup d’état failed and the Japanese surrendered, setting the stage for the American occupation. The Treaty of San Francisco was signed on September 8, 1951, normalizing relations between the United States and Japan. On April 28, 1952, the occupation ended and full sovereignty was restored to Japan. The United States continued to hold Iwo Jima until 1968 and Okinawa until 1972, and it maintains a significant military presence on Okinawa today.

A 2015 survey showed that 56 percent of Americans support the bombings, while only 34 percent oppose them.

American Nukes After the End of World War II: Limited vs. Total War

In 1946, Congress established the Atomic Energy Commission, which placed nuclear development under civilian control and was intended to reorient nuclear power away from war and toward peaceful uses of energy. America enjoyed a nuclear monopoly until August 29, 1949, when the Soviets detonated their first atomic device, using information largely gleaned from nuclear espionage against the Manhattan Project.

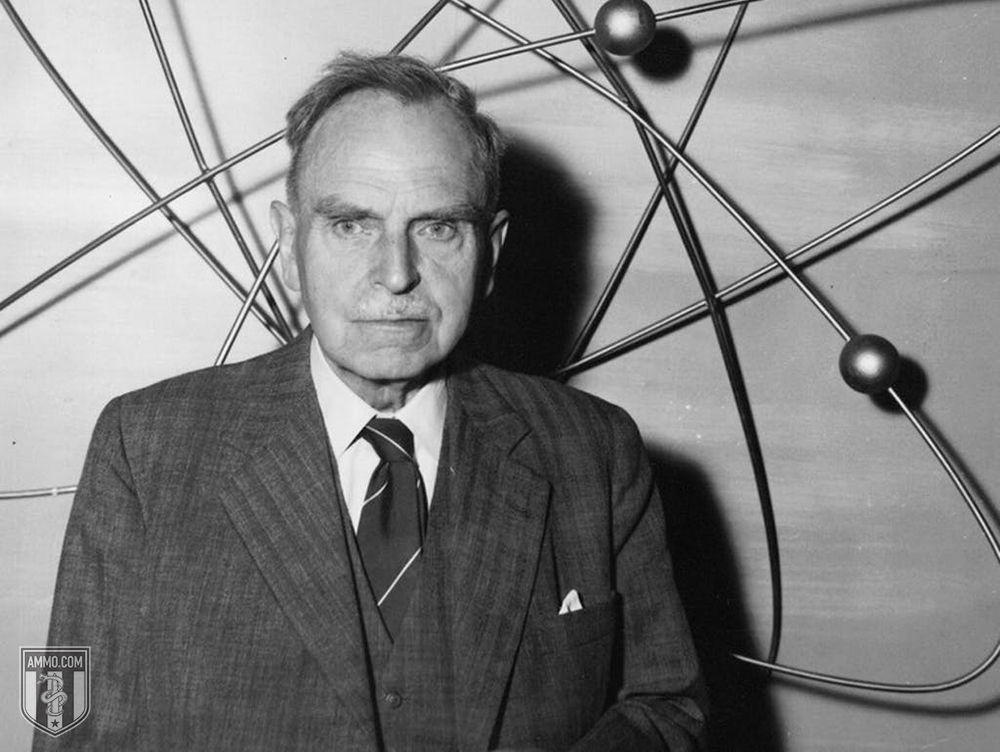

Truman responded on January 31, 1950, by ordering a headlong push toward the development of a hydrogen fusion bomb, with a yield far larger than anything the world had seen before. These are known as thermonuclear weapons. Hungarian scientist Edward Teller had speculated about such a weapon years before it became a reality and was thus known as “the father of the hydrogen bomb,” a sobriquet he detested for the rest of his life because he was only part of the team that built it. On November 1, 1952, the United States detonated its first hydrogen bomb on Elugelab Island in the Marshall Islands as part of Operation Ivy. The device, nicknamed “Mike,” was 450 times as powerful as the weapon dropped on Nagasaki. It destroyed the island entirely, leaving an underwater crater 6,240 feet wide and 164 feet deep.

The Soviets responded on August 12, 1953, with “Joe-4.” Unlike the American weapon, which was too big to actually use, the Soviet device was deliverable.

Thus began the nuclear arms race and the modern age of nuclear warfare.

In the age of fission bombs, nuclear war could be limited and, indeed, tactical. With the development of thermonuclear weapons, nuclear warfare became necessarily total. More than 70,000 warheads were produced from 1945 to 1990. Yields ranged from 0.01 kilotons (the Davy Crockett, a small nuclear projectile fired from a recoilless rifle) to the 25-megaton B-41, the only three-stage weapon the United States ever produced. All told, the United States spent $9.49 trillion in present-day dollars on nuclear weapons development.

While the United States never used nuclear weapons in war again, it threatened to use them multiple times.

Truman publicly announced in 1950 that nukes were under consideration against the Chinese during the Korean War, as did Eisenhower in 1953. General Douglas MacArthur, meanwhile, repeatedly and publicly urged the President to do just that. Eisenhower likewise threatened Communist China several times throughout the 1950s to ensure the continued existence of the Republic of China. As recently as 2002, the Bush Administration threatened Iraq with nukes.

In addition to threats, there have been several “close calls” where nuclear weapons were almost launched due to technological failures or miscommunications, the most recent in 1995. The most famous close call, of course, was the Cuban Missile Crisis, when the United States and the Soviet Union stood on the brink of full-scale nuclear war for nearly two weeks.

Are Nukes Bad?

Are nukes necessarily bad, especially the smaller ones used during World War II? The numbers cited above are stark: roughly 226,000 Japanese dead at the high end, compared to 5 million Japanese dead at the low end in the event of an invasion. General LeMay, while serving as Governor George Wallace’s running mate, touched on this calculus:

“So we go on and don't do it, and let the war go on. Over a period of 3 and a half or four years, we did burn down every town in North Korea. And every town in South Korea. And what? Killed off 20 percent of the Korean population. What I'm trying to say is, once you make a decision to use military force to solve your problem – then you ought to use it. And use an overwhelming military force. Use too much. And deliberately use too much. So that you don't make an error on the other side, and not quite have enough. And you roll over everything to start with. And you close it down just like that. You save resources. You save lives. Not only your own, but the enemies too. And the recovery is quicker. And everybody is back to peaceful existence – hopefully in a shorter period of time.”

The point is this: barring a catastrophic end to human civilization, the bomb might actually save a lot of lives, because the nastiest part of war is its length. Whereas total war limits the duration, limited war prolongs the nastiness.

But the important language here is “barring a catastrophic end to human civilization.” At some point, nuclear war moved from being something that would just kill a lot of people to something that might end human life on earth, or at least life as we know it. President Ronald Reagan was so shaken by the 1983 television film The Day After that he considered telling the Soviets point-blank that he had no intention of launching a first strike, which would have ended America’s ability to bluff.

In the final analysis, while nukes might have been able to save lives in a previous era, by the logic of war, it would be very hard to argue that they could have done so at any point in the last 50 years or more, because they have become so powerful and so plentiful. One or two low-yield bombs dropped here and there would be a tragedy on the same order as firebombings or other horrific acts of war. Hundreds of multi-megaton ICBMs flying through the air could be the end of everything, everywhere.

The United States had over 2,800 nuclear weapons as of March 1, 2019, a number held at rough parity with the Russian Federation by international treaty. France and the United Kingdom have a few hundred each. No one knows precisely how many India, China, Pakistan, North Korea and Israel have.
